Kinetic Random Access Memory (K-RAM) stores data as continuous light waves circulating in fiber optic loops at roughly 204,000 km/s. A new physical substrate for AI inference infrastructure.

The field treats inference expense as a compute efficiency problem. It isn't. Token-by-token decode is memory-bound.

During decode. Billions in GPU hardware sits idle, waiting for weights to transit the memory bus.

140 GB of weights (70B parameters at 2 bytes each) must transit the memory bus per token for a 70B model. That transfer time is the physical ceiling on decode speed.
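The arithmetic behind the 140 GB figure can be sketched as follows. This is an idealized estimate, not a benchmark: it assumes 2 bytes per parameter (FP16/BF16), batch size 1, and a memory bandwidth of 3.35 TB/s, which is the published HBM3 figure for an H100 SXM and is not stated in this deck. It ignores multi-GPU sharding, KV-cache traffic, and batching. The function name `decode_ceiling` is illustrative.

```python
def decode_ceiling(params_billion: float, bytes_per_param: float, bandwidth_tb_s: float):
    """Return (weight size in GB, ms per token, tokens per second) for bandwidth-bound decode.

    Assumes every weight is read from memory exactly once per generated token.
    """
    # 1e9 parameters * bytes per parameter / 1e9 bytes per GB = GB
    weight_gb = params_billion * bytes_per_param
    # Convert TB/s to GB/s, then divide bytes moved by bytes per second
    seconds_per_token = weight_gb / (bandwidth_tb_s * 1000.0)
    return weight_gb, seconds_per_token * 1000.0, 1.0 / seconds_per_token


# 70B model, 2 bytes per parameter, assumed 3.35 TB/s memory bandwidth
weight_gb, ms_per_token, tokens_per_s = decode_ceiling(70, 2, 3.35)
print(f"{weight_gb:.0f} GB per token, {ms_per_token:.1f} ms/token, {tokens_per_s:.1f} tokens/s")
```

Under these assumptions a single sequence cannot decode faster than a few dozen tokens per second, however much compute is available; that is what "memory-bound" means here.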

Of an H100 SXM module cost. Memory is where the money burns.

At 16K context on a 70B model. At 128K+ tokens, we hit a physical wall.

The compute-memory chasm: stranded capital in inference.

AI's most acute bottleneck is not compute speed; it is the structural gap between GPU compute capability and memory capacity.

One K-RAM unit, integrated in 48 hours, transforms a memory-starved cluster running at 40% utilization into a compute-bound system running at 97% capacity.

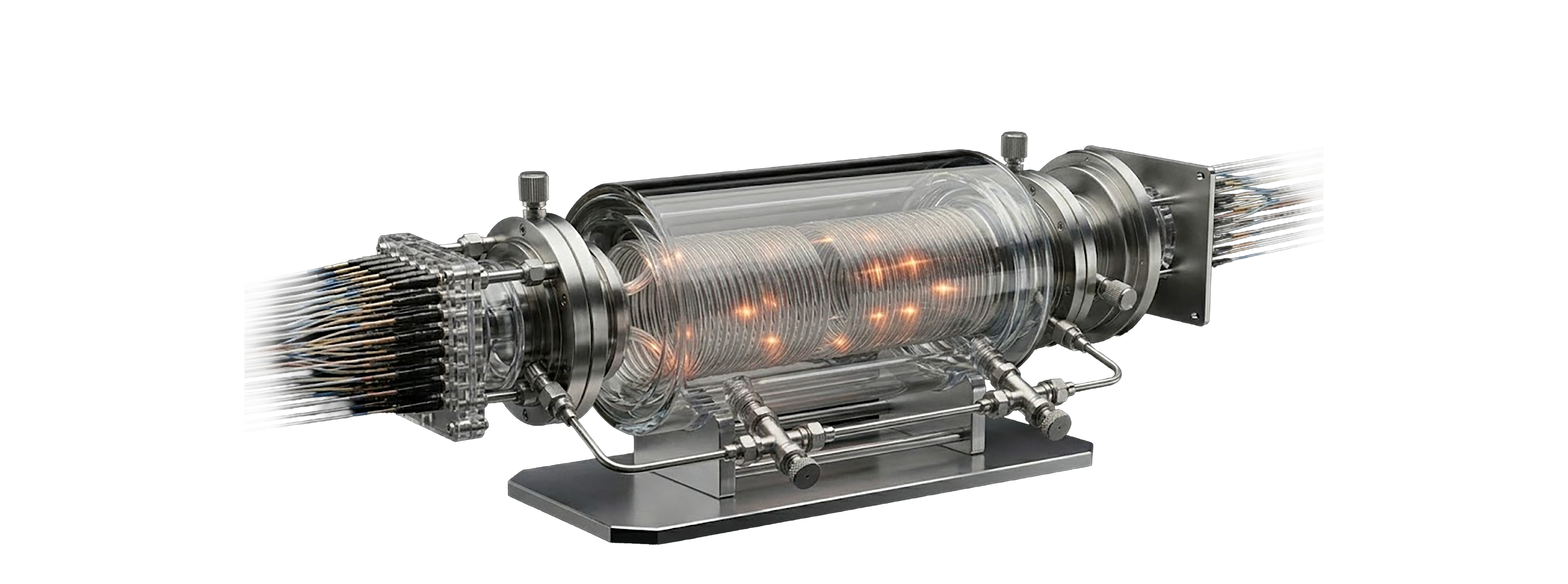

10 km of single-mode fiber coiled inside a double-walled borosilicate glass vessel, thermally controlled to ±0.05°C. Data circulates as light at 204,218 km/s. Round-trip access: 49 µs (10 km ÷ 204,218 km/s).
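The loop figures can be checked with a few lines of arithmetic. The sketch below derives the circulation time from the stated length and propagation speed, and the refractive index that speed implies. It also shows how the amount of data physically in flight would be estimated; the 100-channel count is taken from the optical switch listed in this deck, while the per-channel line rate is a purely hypothetical parameter, since the deck does not state one. All function names are illustrative.

```python
C_VACUUM_KM_S = 299_792.458  # speed of light in vacuum


def loop_time_us(length_km: float, speed_km_s: float) -> float:
    """Time for light to traverse the loop once, in microseconds."""
    return length_km / speed_km_s * 1e6


def implied_group_index(speed_km_s: float) -> float:
    """Refractive (group) index implied by a given propagation speed."""
    return C_VACUUM_KM_S / speed_km_s


def bits_in_flight(length_km: float, speed_km_s: float,
                   channels: int, gbps_per_channel: float) -> float:
    """Raw bits held in the loop at any instant: transit time * aggregate line rate."""
    transit_s = length_km / speed_km_s
    return transit_s * channels * gbps_per_channel * 1e9


print(f"loop time: {loop_time_us(10, 204_218):.2f} us")
print(f"implied index: {implied_group_index(204_218):.4f}")
# Hypothetical 100 Gb/s per channel; the deck does not specify a line rate.
raw_bits = bits_in_flight(10, 204_218, channels=100, gbps_per_channel=100)
print(f"raw bits in flight: {raw_bits:.3e}")
```

The 49 µs figure is one full circulation, so it is the longest a reader would wait for a given bit to come around; the raw in-flight figure is before any compression stage is applied.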

Three-layer proprietary pipeline achieving 5,000× constitutional compression. CFR encodes the signal as a continuous cubic function. TNR stores the generating function as ternary weights. DRC guarantees bit-perfect reconstruction. (CFR + TNR + DRC) = C-REN

Model layers stream on-demand at 49 µs instead of loading the full model into VRAM. GPU memory is freed entirely for activations and batching. Memory-bound inference becomes compute-bound.

The Vessel (borosilicate glass, 10 km SMF-28e+ fiber, silicone fluid) + NFR Server (2U) + Thermal Control Unit (2U, PID, ±0.05°C) + Optical Switch (1U, 100-channel DWDM).

Operator: Graeme Harrison, CEO. Real production workload validation on 8× NVIDIA H200 GPUs. 1,200% annual customer ROI. $35M NPV on a single $1M K-RAM investment.

Big tech capital is aggressively chasing the networking layer. They are entirely ignoring the memory layer. K-RAM occupies the untapped gap.

Frontier model serving at 10–20× throughput. Zero offloading. Solved KV cache. The pilot customer is already waiting.

"Unlimited VRAM" instances. Licensing at $500M/year per hyperscaler. AWS, Azure, GCP want differentiation.

Canadian IP — not subject to US export controls. Cold climate eliminates cooling costs. The Switzerland of AI infrastructure.

15 years of peer-reviewed research proving optical fiber can store information and perform interference-based computation.

Veteran systems architect. Independently reviewed the approach and confirmed the fundamental mathematical insight.

Corning: creators of the SMF-28 fiber. Their glass has carried the internet for 50 years; it is now the physical storage medium for K-RAM.

15 years of peer-reviewed research proving identical fiber delay-line memory physics, DWDM channel separation, and ±0.05°C thermal viability.

The underlying physics are not theoretical. Delay-line optical memory has been independently studied, measured, and published in academic literature for over a decade — well before K-RAM existed as a product. Queen's validates the substrate, not the pitch.

The world's most respected systems programmer publicly derived and validated the uncompressed physical principle of kinetic optical memory.

Independently derived, unprompted, from first principles. He got there on his own. That's the validation that matters.

The core substrate (SMF-28e+ fiber) is a commodity with industrial-scale production, currently supplying Meta's next-gen infrastructure. Zero supply chain risk.

SMF-28e+ is the most produced single-mode fiber on earth. Corning ships it by the million kilometers. K-RAM doesn't invent a new material — it uses the most reliable optical medium ever manufactured, already priced at commodity scale, already inside every major data center on the planet.

210 provisional patents covering system architecture, loop geometry, optical encoding, DWDM configuration, and GPU streaming protocol.

Trade secrets protecting proprietary PHI optimization. Competitors using standard NFR achieve marginal compression; PHI delivers the 20× multiplier.

Genesis Code + Quantum Randomness. The proprietary bootloader generates unique algorithms at runtime. You cannot copy what doesn't statically exist.

Let's get to work.